The current discourse regarding an artificial intelligence bubble focuses disproportionately on stock market volatility while ignoring the underlying divergence between infrastructure investment and realized unit economics. To determine whether a sector is in a bubble, one must measure the delta between speculative capital expenditure (CapEx) and marginal utility generation. If the cost to produce an automated unit of intelligence exceeds the value that unit creates in a workflow, the system is fundamentally insolvent regardless of share price.
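One way to make this unit-level test concrete is to amortize hardware CapEx into a per-unit cost and compare it with the value each unit creates. The sketch below assumes straight-line amortization; the function names (`cost_per_unit`, `unit_margin`) and every figure are hypothetical, chosen only to illustrate the arithmetic.

```python
# Hypothetical unit-economics check: amortize hardware CapEx into a per-unit
# cost and compare it with the value each automated unit of work creates.
# Function names and all figures are illustrative assumptions.


def cost_per_unit(capex: float, amortization_years: float,
                  annual_opex: float, annual_units: float) -> float:
    """Fully loaded cost of one automated unit of work.

    CapEx is spread evenly (straight-line) over the hardware's useful life
    and added to yearly operating costs such as power, staff, and licenses.
    """
    annual_capex = capex / amortization_years
    return (annual_capex + annual_opex) / annual_units


def unit_margin(value_per_unit: float, unit_cost: float) -> float:
    """Value a unit creates in a workflow minus what it costs to produce."""
    return value_per_unit - unit_cost


if __name__ == "__main__":
    # Assumed: $1M of GPUs over 4 years, $50k/yr opex, 1M tasks per year,
    # and each task worth $0.25 to the business.
    cost = cost_per_unit(1_000_000, 4, 50_000, 1_000_000)
    margin = unit_margin(0.25, cost)
    status = "insolvent" if margin < 0 else "solvent"
    print(f"cost/unit ${cost:.2f}, margin/unit ${margin:.2f} -> {status} at the unit level")
```

With these assumed inputs each task costs $0.30 to produce but is worth only $0.25, so the workload loses money on every unit regardless of how any share price moves.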

The stability of the AI sector rests on three structural pillars: the hardware-to-revenue lag, the saturation of linguistic data, and the transition from architectural scaling to algorithmic efficiency.

The Hardware Revenue Lag and the CapEx Trap

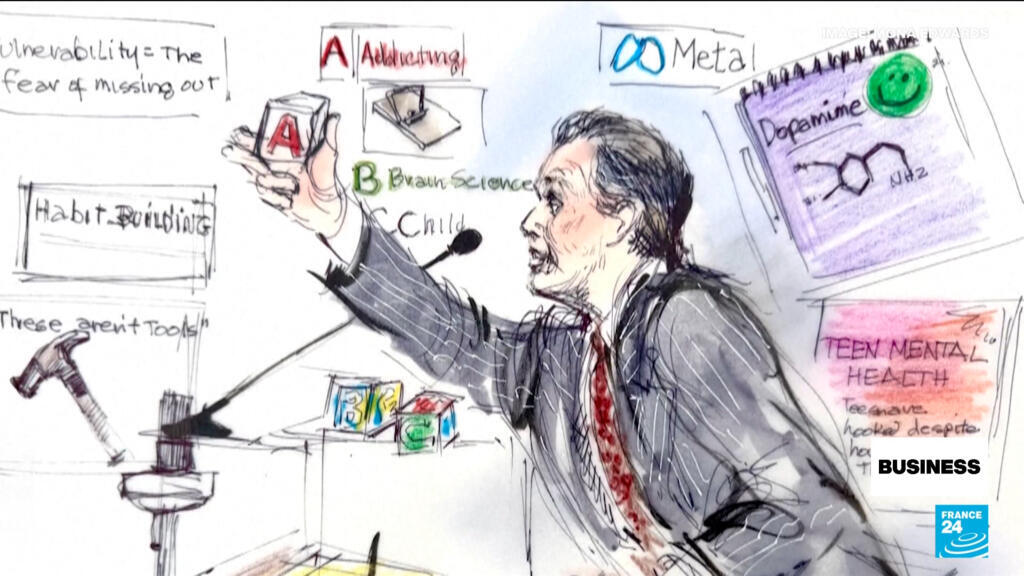

Hyperscale cloud providers and enterprise entities are currently locked in a prisoner’s dilemma. The "Fear Of Missing Out" (FOMO) has been replaced by a "Fear Of Being Left Behind" (FOBLB), forcing massive investment in H100 and B200 GPU clusters. This creates a deceptive growth signal: when a company like NVIDIA reports record earnings, those earnings reflect the industry's input costs, not the value its output creates.

The primary risk is the duration of the lag between hardware acquisition and software monetization. In previous technological cycles, most notably the fiber-optic buildout of the late 1990s, the infrastructure was laid years before the applications that would use it (streaming, cloud computing, the mobile web) existed. We are seeing a parallel "over-provisioning" phase.

- Phase 1: Infrastructure Build (Current): High demand for compute, energy, and data centers.

- Phase 2: Capability Overhang: Model capabilities exist, but enterprise integration is stalled by security, latency, and "hallucination" risks.

- Phase 3: The Rationalization: Capital dries up for firms that cannot turn a $1 token input into a $2 productivity output.

The "bubble" is not characterized by the existence of the technology, but by the mispricing of its immediate deployment velocity.

The Law of Diminishing Returns in Model Scaling

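For the past five years, the industry has operated under Scaling Laws, which hold that increasing compute and data volume yields predictable improvements in model performance. That relationship is a power law rather than a linear one: each further improvement requires a multiplicative (for example, tenfold) increase in compute, and it is now running into physical and economic ceilings.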
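Scaling laws are usually written as power laws, which look linear only on log-log axes. The sketch below uses a simplified, compute-only version of that form, L(C) = E + A·C^(−α); the functional shape mirrors published scaling-law work, but the constants `E`, `A`, and `ALPHA` are arbitrary assumptions chosen only to make diminishing returns visible.

```python
# Simplified, compute-only scaling curve: L(C) = E + A * C**(-ALPHA).
# The functional form mirrors published scaling-law work; the constants are
# arbitrary assumptions chosen only to make the shape visible.
E = 1.7       # irreducible loss: the floor no amount of compute removes
A = 10.0      # scale of the reducible part
ALPHA = 0.05  # how quickly the reducible part shrinks with compute


def loss(compute: float) -> float:
    """Predicted loss for a training compute budget (arbitrary units)."""
    return E + A * compute ** (-ALPHA)


def gains_per_10x(start_exponent: int, steps: int) -> list:
    """Loss reduction bought by each successive 10x increase in compute."""
    budgets = [10.0 ** k for k in range(start_exponent, start_exponent + steps + 1)]
    losses = [loss(c) for c in budgets]
    return [before - after for before, after in zip(losses, losses[1:])]


if __name__ == "__main__":
    for k, gain in enumerate(gains_per_10x(20, 6), start=21):
        print(f"10^{k - 1} -> 10^{k} compute: loss improves by {gain:.4f}")
```

Under these assumed constants, each tenfold increase in compute buys about 11% less improvement than the one before (10^−0.05 ≈ 0.89), while costing ten times as much, and the loss can never fall below `E`.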

The Data Exhaustion Problem

Large Language Models (LLMs) have consumed nearly all of the high-quality, human-generated public text available. To continue scaling, labs are turning to synthetic data, meaning data generated by other AI models. This introduces the risk of "Model Collapse," in which errors and biases in one generation are amplified in the next, and the model's learned distribution gradually loses its rare "tail" cases and drifts away from the original human data.
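A toy simulation can show one mechanism behind Model Collapse: when each generation is fit only to samples from the previous one, rare events that happen to go unsampled vanish permanently. The sketch assumes a Zipf-shaped "human" token distribution and treats "training" as simple frequency counting; the helper names are hypothetical, and it illustrates tail loss, not the behavior of any real LLM pipeline.

```python
import random
from collections import Counter


def zipf_distribution(vocab_size: int) -> dict:
    """Long-tailed 'human' distribution: token i has probability proportional to 1/i."""
    weights = {i: 1.0 / i for i in range(1, vocab_size + 1)}
    total = sum(weights.values())
    return {i: w / total for i, w in weights.items()}


def next_generation(probs: dict, n_samples: int, rng: random.Random) -> dict:
    """Train the next 'model' purely on samples drawn from the previous one.

    'Training' is just frequency estimation, so a token that is never sampled
    gets probability zero and can never reappear in later generations.
    """
    tokens = list(probs)
    sample = rng.choices(tokens, weights=[probs[t] for t in tokens], k=n_samples)
    counts = Counter(sample)
    return {t: c / n_samples for t, c in counts.items()}


def support_sizes(vocab_size: int = 1000, n_samples: int = 2000,
                  generations: int = 10, seed: int = 0) -> list:
    """Number of distinct tokens the model can still produce, per generation."""
    rng = random.Random(seed)
    probs = zipf_distribution(vocab_size)
    sizes = [len(probs)]
    for _ in range(generations):
        probs = next_generation(probs, n_samples, rng)
        sizes.append(len(probs))
    return sizes


if __name__ == "__main__":
    print(support_sizes())
```

The support can only shrink from one generation to the next; the rarest tokens disappear first, which is the "degradation of the tails" the paragraph describes.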

The Energy Constraint

The power density required for next-generation training clusters is outstripping the capacity of aging electrical grids. A cluster of 100,000 GPUs draws on the order of 100 megawatts or more once servers, networking, and cooling are counted, roughly the power demand of a mid-sized city. The cost of intelligence is now tethered to the spot price of electricity and the regulatory speed of nuclear or natural gas permitting. This creates a hard floor on how cheap "intelligence" can become, potentially preventing the hyper-deflationary environment optimists predict.
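A back-of-envelope calculation shows how cluster size translates into grid demand and an hourly electricity bill. Every parameter is an assumption: 700 W approximates the maximum rated power of an H100 SXM module, while the server-overhead multiplier and PUE (power usage effectiveness) values are illustrative; the function names are hypothetical.

```python
# Back-of-envelope power and electricity cost for a large GPU cluster.
# All parameters are assumptions for illustration:
#   - 700 W per GPU approximates the rated maximum of an H100 SXM module;
#   - server_overhead covers CPUs, memory, networking, and storage;
#   - pue (power usage effectiveness) adds cooling and power-delivery losses.


def facility_power_mw(num_gpus: int, watts_per_gpu: float = 700.0,
                      server_overhead: float = 1.5, pue: float = 1.3) -> float:
    """Estimated total facility draw in megawatts."""
    it_watts = num_gpus * watts_per_gpu * server_overhead
    return it_watts * pue / 1e6


def hourly_energy_cost(power_mw: float, usd_per_kwh: float) -> float:
    """Electricity cost of running the facility at full load for one hour."""
    return power_mw * 1_000 * usd_per_kwh  # MW -> kW, times one hour


if __name__ == "__main__":
    p = facility_power_mw(100_000)
    for price in (0.05, 0.08, 0.12):  # hypothetical $/kWh prices
        print(f"{p:.1f} MW at ${price}/kWh -> ${hourly_energy_cost(p, price):,.0f}/hour")
```

Under these assumptions the facility draws about 136.5 MW, and because the hourly bill scales linearly with the electricity price, the cost of compute inherits the volatility of the power market.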

Quantifying the Value Proposition: LLMs as Loss Leaders

Current enterprise AI adoption is largely performative or experimental. To move from a "bubble" to a "backbone," AI must solve the High-Stakes Accuracy Constraint.

In a low-stakes environment (e.g., writing a marketing email), a 90% accuracy rate is acceptable. In a high-stakes environment (e.g., automated legal discovery, medical diagnostics, or real-time supply chain adjustment), 90% is a failure. Moving from 90% to 99.9% accuracy means cutting the error rate from 10% to 0.1%, a hundredfold reduction, and the cost of closing that gap is non-linear: it requires a disproportionate increase in verification layers, human-in-the-loop oversight, and specialized fine-tuning.
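A toy model helps show why stacking verification is expensive. The sketch assumes, optimistically, that each verification layer (automated check, second model, or human review) independently catches a fixed share of the errors that reach it; the function names, the 1,000,000-decision volume, and the catch rates are hypothetical.

```python
import math

# Toy model of stacked verification layers (automated checks, a second model,
# human review). ASSUMPTION: each layer independently catches a fixed share
# of the errors that reach it. Layers that share blind spots catch less,
# so real-world counts would be higher than these.


def residual_error(base_error: float, catch_rate: float, layers: int) -> float:
    """Error rate remaining after `layers` independent verification passes."""
    return base_error * (1.0 - catch_rate) ** layers


def layers_needed(base_error: float, target_error: float, catch_rate: float) -> int:
    """Smallest number of layers that brings the error rate down to the target."""
    if target_error >= base_error:
        return 0
    return math.ceil(math.log(target_error / base_error) / math.log(1.0 - catch_rate))


if __name__ == "__main__":
    decisions = 1_000_000  # hypothetical annual volume
    for target in (0.10, 0.01, 0.001):  # 90%, 99%, 99.9% accuracy
        print(f"error rate {target:.1%}: ~{target * decisions:,.0f} errors/year, "
              f"{layers_needed(0.10, target, 0.5)} layers at a 50% catch rate, "
              f"{layers_needed(0.10, target, 0.2)} at a 20% catch rate")
```

Even under independence, reaching 99.9% from a 90% base takes 7 layers at a 50% catch rate; if layers catch only 20% of what reaches them, the count jumps to 21, and every layer adds its own latency and review cost.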

- The Productivity Paradox: Even if AI drafts code 50% faster, the time spent debugging AI-generated code can offset those gains.

- The Integration Debt: Replacing a legacy human workflow with an AI workflow requires a complete restructuring of the data architecture, which can cost up to 5x the AI software license itself.
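Both effects reduce to simple arithmetic. The sketch below reads "50% faster" as halving drafting time (an assumption; strictly, 1.5x speed would cut time by a third) and prices integration work as a multiple of the license fee; the function names and all figures are hypothetical.

```python
# Net effect of AI-assisted coding and the first-year cost of adoption.
# All inputs are hypothetical; the point is the structure of the arithmetic.


def net_hours_saved(draft_hours: float, draft_time_cut: float,
                    extra_debug_hours: float) -> float:
    """Drafting hours saved minus extra debugging hours the AI output creates.

    draft_time_cut is the fraction of drafting time removed (0.5 = half).
    """
    return draft_hours * draft_time_cut - extra_debug_hours


def first_year_cost(license_cost: float, integration_multiple: float) -> float:
    """License plus data-architecture rework priced as a multiple of the license."""
    return license_cost * (1.0 + integration_multiple)


if __name__ == "__main__":
    # 40 drafting hours cut in half saves 20 hours, unless debugging eats them.
    for debug in (5.0, 20.0, 30.0):
        print(f"extra debugging {debug:>4} h -> net saved {net_hours_saved(40, 0.5, debug):+.1f} h")
    # A $100k license with integration work at 5x the license costs $600k in year one.
    print(f"first-year cost: ${first_year_cost(100_000, 5):,.0f}")
```

Once extra debugging reaches the drafting time saved, the net gain is zero; past that point the tool costs time.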

The market values AI software as a high-margin SaaS product, but in practice it functions more like a low-margin professional services business, because each enterprise use case requires heavy customization.
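The difference shows up in gross margin. This sketch computes the gross margin of one hypothetical enterprise contract as per-customer customization cost rises; the contract value, inference cost, and customization figures are illustrative assumptions, and `gross_margin` is a hypothetical helper.

```python
# Gross margin of one hypothetical enterprise AI contract, showing how
# per-customer customization work drags a "software" business toward
# services-style margins. All figures are illustrative assumptions.


def gross_margin(revenue: float, inference_cost: float, customization_cost: float) -> float:
    """(revenue - cost of revenue) / revenue, as a fraction."""
    cost_of_revenue = inference_cost + customization_cost
    return (revenue - cost_of_revenue) / revenue


if __name__ == "__main__":
    revenue = 500_000    # annual contract value
    inference = 100_000  # model/API and compute spend to serve the customer
    for custom in (0, 150_000, 250_000):  # solution engineering, fine-tuning, integration
        margin = gross_margin(revenue, inference, custom)
        print(f"customization ${custom:,}: gross margin {margin:.0%}")
```

With no customization the contract looks like the SaaS business the market is pricing (80% under these assumptions); as custom work grows, the margin falls to 50% and then 30%, closer to a services firm.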

Displaced vs. Augmented Labor Economics

The narrative that AI will cause immediate mass unemployment ignores the "Jevons Paradox." This economic principle holds that as a resource becomes more efficient to use, its total consumption can increase rather than decrease, because the lower effective cost stimulates more demand.

If AI makes a software engineer 2x more productive, the demand for software does not stay static; it increases because software becomes cheaper to produce, and if the quantity of software demanded more than doubles, total demand for engineering labor rises rather than falls. However, this only holds if the quality of the output remains high. If the market is flooded with low-quality, AI-generated "slop," the value of the output collapses, leading to a deflationary spiral in which no one makes a profit: the "Commoditization Trap."
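Whether the Jevons effect protects jobs turns on the price elasticity of demand. The sketch assumes a constant-elasticity demand curve, Q = k·P^(−e), that the price of software falls in proportion to the productivity gain, and that quality stays constant; `labor_demand_multiplier` is a hypothetical name.

```python
# Jevons-style check with a constant-elasticity demand curve, Q = k * P**(-e).
# ASSUMPTIONS (illustrative): the price of software falls in proportion to
# the productivity gain, and output quality stays constant.


def labor_demand_multiplier(productivity_gain: float, elasticity: float) -> float:
    """Factor by which total engineering hours demanded change.

    Price falls to 1/productivity_gain, so quantity demanded rises by
    productivity_gain**elasticity, while each unit of software now needs only
    1/productivity_gain of the hours. Net effect: gain**(elasticity - 1).
    """
    quantity_multiplier = productivity_gain ** elasticity
    return quantity_multiplier / productivity_gain


if __name__ == "__main__":
    for e in (0.5, 1.0, 1.5):
        m = labor_demand_multiplier(2.0, e)
        trend = "rises" if m > 1 else "falls" if m < 1 else "is unchanged"
        print(f"elasticity {e}: demand for engineering hours {trend} (x{m:.2f})")
```

Total demand for engineering hours grows only when elasticity exceeds 1. If low-quality output erodes what buyers will pay for software, the demand response weakens, which is one way to read the Commoditization Trap.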

The Transition to Agentic Systems

The next structural shift that will determine the longevity of the AI investment cycle is the move from Chat-based interfaces to Agentic systems.

Chatbots are passive; they wait for a prompt. Agents are active; they are given a goal and execute a series of steps across different software environments to achieve it. The economic value of an agent is significantly higher because it replaces a "process" rather than a "task."
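Replacing a process is worth more than replacing a task, but it is also harder, because every step must succeed. The sketch assumes each step succeeds independently with the same probability, so an n-step chain succeeds with probability p^n; real steps are rarely independent, and `chain_success` is a hypothetical name.

```python
# End-to-end reliability of a multi-step process. ASSUMPTION: each step
# succeeds independently with the same probability, so a chain of n steps
# succeeds with probability p**n. Real steps are rarely independent.


def chain_success(step_success: float, steps: int) -> float:
    """Probability that every one of `steps` sequential steps succeeds."""
    return step_success ** steps


if __name__ == "__main__":
    for p in (0.90, 0.95, 0.99):
        row = ", ".join(f"{n} steps: {chain_success(p, n):.0%}" for n in (1, 5, 10, 20))
        print(f"per-step success {p:.0%} -> {row}")
```

At 90% per-step success, a ten-step process completes only about 35% of the time (0.9^10 ≈ 0.349), which is why per-step accuracy that suffices for a chatbot falls short for an agent.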

The viability of this shift depends on solving the "brittleness" of current models. If an agent encounters an edge case it wasn't trained for, it can enter an infinite loop or execute a catastrophic error in a live production environment. Until there is a breakthrough in Symbolic Reasoning—the ability for AI to understand logic and rules rather than just predicting the next word—agents will remain confined to "sandboxed" environments.
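Until that breakthrough arrives, "sandboxing" in practice means wrapping the agent in guardrails. A minimal sketch, assuming a hypothetical interface in which a policy maps the current state to an action and a next state (this is not any agent framework's API), shows three common guards: an action allowlist, a hard step budget, and revisited-state detection to catch loops. The action names in `ALLOWED_ACTIONS` are illustrative.

```python
from typing import Callable, Hashable, Tuple

# Minimal guarded agent loop. HYPOTHETICAL interface: a "policy" maps the
# current state to (action, next_state). This is not any framework's API;
# it only illustrates three guardrails for running agents in a sandbox.

ALLOWED_ACTIONS = {"read", "search", "draft", "done"}  # no production writes


def run_agent(policy: Callable[[Hashable], Tuple[str, Hashable]],
              start: Hashable, max_steps: int = 20) -> str:
    """Run the policy until it finishes or a guardrail stops it."""
    seen = {start}
    state = start
    for _ in range(max_steps):
        action, state = policy(state)
        if action not in ALLOWED_ACTIONS:
            return f"halted: '{action}' is outside the sandbox"
        if action == "done":
            return "completed"
        if state in seen:
            return "halted: revisited a previous state (likely loop)"
        seen.add(state)
    return "halted: step budget exhausted"


if __name__ == "__main__":
    policies = {
        "finisher": lambda s: ("done", s) if s >= 3 else ("draft", s + 1),
        "looper": lambda s: ("search", s % 3 + 1),
        "rogue": lambda s: ("delete_records", s),
    }
    for name, policy in policies.items():
        print(name, "->", run_agent(policy, 0))
```

Revisited-state detection only catches loops where the state repeats exactly; the step budget is the backstop for loops that wander through new but useless states.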

Strategic Position and Survival Mechanics

To navigate the current valuation cycle, organizations must pivot from generalized AI deployment to specialized vertical stacks. The companies that survived the 2000 dot-com crash were those with a "moat" built on proprietary data or physical distribution. In the AI era, the "moat" is not the model (which is rapidly being commoditized by open-source alternatives like Llama), but the proprietary feedback loop.

The Vertical Integration Play

General-purpose models are becoming a utility, similar to electricity or internet access. Profitability will migrate to the edges:

- Custom Silicon: Designing chips for specific inference tasks to bypass the NVIDIA premium.

- Proprietary Data Moats: Companies with non-public, high-value datasets (e.g., medical records, specialized engineering schematics) that cannot be scraped by general crawlers.

- The Last-Mile Verification: Specialized firms that provide the "trust layer" to ensure AI outputs are safe and accurate.

The "bubble" will burst for companies that are merely "wrappers" around third-party APIs with no unique data or workflow integration. The survivors will be those who treat AI as a component of a larger system rather than the product itself.

The Forecast for Capital Realignment

Expect a "Great Shakeout" in the next 18–24 months. This will not be a total collapse of the sector, but a violent rotation of capital. Funding will migrate away from "Foundation Model" startups—which require billions in CapEx just to stay relevant—and toward "Application-Specific" AI that demonstrates a clear Reduction in Force (RIF) or a measurable increase in Top-Line Revenue.

The primary metric for the next phase of the AI economy is Return on Compute (ROC). If a company cannot define its ROC with the same precision as Return on Invested Capital (ROIC), it is currently operating on speculation rather than strategy.
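The text does not define ROC precisely, so the following is one possible operationalization, by analogy with ROIC (net operating profit after tax divided by invested capital): net value attributable to a workload per dollar of compute spent. The `ComputeLedger` class, its fields, and the formula are assumptions for illustration, not an established standard.

```python
from dataclasses import dataclass

# One possible way to operationalize Return on Compute (ROC), by analogy with
# ROIC. The definition and the field names are assumptions for illustration.


@dataclass
class ComputeLedger:
    attributable_value: float  # revenue gained or cost avoided, traced to the workload
    compute_cost: float        # inference, amortized training/hardware, and energy

    def roc(self) -> float:
        """Net value created per dollar of compute spent."""
        if self.compute_cost <= 0:
            raise ValueError("compute_cost must be positive")
        return (self.attributable_value - self.compute_cost) / self.compute_cost


if __name__ == "__main__":
    # The "$1 token input into a $2 productivity output" threshold is an ROC of 1.0.
    print(ComputeLedger(attributable_value=2.0, compute_cost=1.0).roc())
    print(ComputeLedger(attributable_value=0.8, compute_cost=1.0).roc())
```

The hard part in practice is the `attributable_value` field: an ROC figure is only as precise as the attribution of revenue or savings to the workload.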

The tactical move for any entity in this space is to aggressively reduce dependency on tokens from general-purpose models and invest in a closed-loop system where the AI's output is continuously refined by a specific, proprietary business result. Stop optimizing for "intelligence" and start optimizing for "closed-task completion rates."
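Optimizing for completion rates changes which model looks cheapest. The sketch assumes failed attempts are simply retried and that attempts succeed independently, so the expected number of attempts per closed task is 1 / completion rate; the helper names, prices, and rates are hypothetical.

```python
# Comparing models by cost per *completed* task rather than cost per call.
# ASSUMPTIONS: a failed attempt is simply retried and attempts succeed
# independently, so the expected number of attempts is 1 / completion_rate.


def completion_rate(outcomes: list) -> float:
    """Share of attempts that closed the task, judged by the business result."""
    return sum(1 for ok in outcomes if ok) / len(outcomes)


def cost_per_completed_task(cost_per_attempt: float, rate: float) -> float:
    """Expected spend to get one task fully closed."""
    return cost_per_attempt / rate


if __name__ == "__main__":
    # Hypothetical: a cheap general model vs. a pricier, narrowly tuned one.
    general = cost_per_completed_task(0.02, 0.40)
    specialized = cost_per_completed_task(0.03, 0.90)
    print(f"general: ${general:.4f} per closed task, specialized: ${specialized:.4f}")
```

Under these assumed numbers, the specialized model costs 50% more per call but about a third less per closed task ($0.033 versus $0.05).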