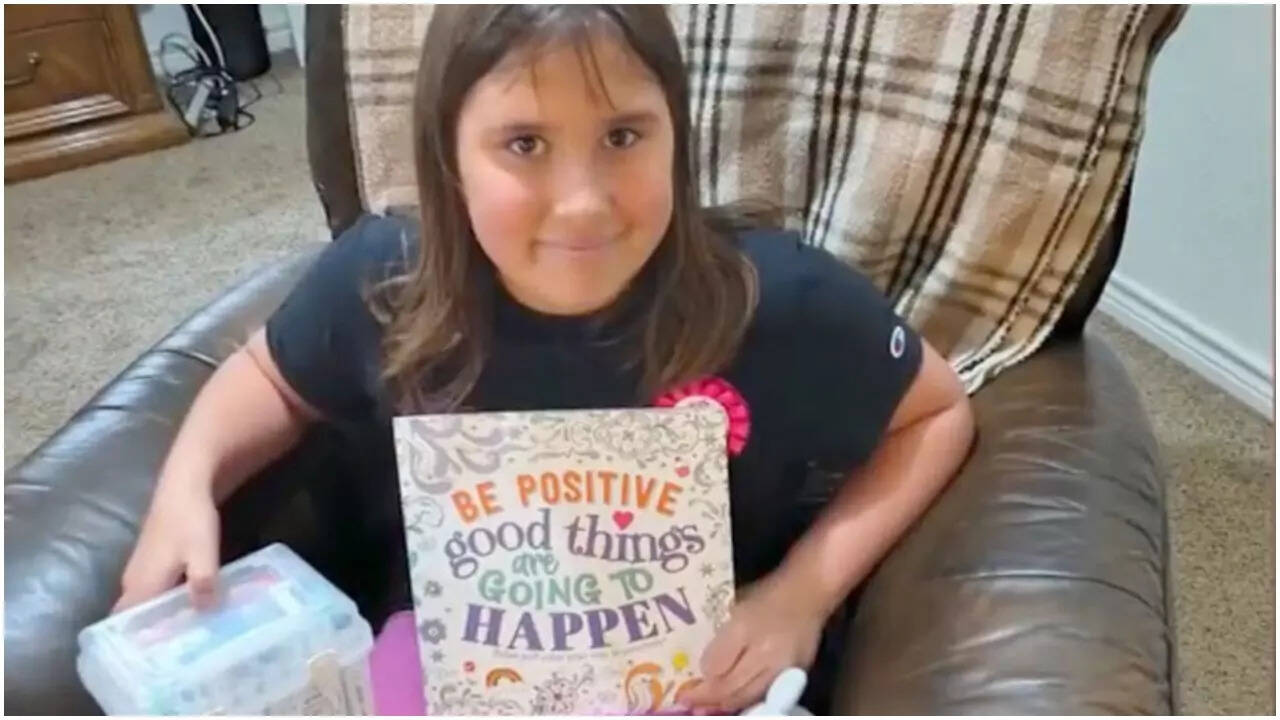

The death of a nine-year-old child seeking online validation is not a freak accident. It is the predictable outcome of an attention economy that treats pre-adolescent neurological development as a data point. When a child dies from the "blackout challenge"—a dangerous dare to self-asphyxiate until losing consciousness—the public outcry usually targets the specific video. This misses the mechanical reality of the platform. The crisis isn't just the content; it is the algorithmic velocity that pushes high-arousal, high-risk behavior to users whose prefrontal cortexes cannot yet process long-term consequences.

Parents of these children aren't fighting a "game." They are fighting an industrial-scale feedback loop. For a nine-year-old, the chemical hit of a "like" or a "view" carries more weight than the abstract threat of oxygen deprivation. The blackout challenge persists because it generates the exact type of engagement that social media systems are built to reward.

The Mechanics of a Digital Death Trap

To understand why a child would tie a ligature around their neck for a camera, you have to look at the engineering of the feed. Algorithms don't have a moral compass. They have an engagement target. If a video of a child holding their breath or using a belt to pass out keeps viewers on the app for three seconds longer than a cartoon, the system will amplify it.
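As a rough sketch of that logic, assume a hypothetical feed that ranks videos by predicted watch time and nothing else. The `Video` class, its fields, and the numbers are illustrative assumptions, not any platform's actual code.

```python
from dataclasses import dataclass


@dataclass
class Video:
    title: str
    predicted_watch_seconds: float  # hypothetical engagement estimate
    is_risky: bool                  # known to the reader, never read by the ranker


def rank_feed(videos):
    """Order videos by predicted watch time alone, highest first."""
    return sorted(videos, key=lambda v: v.predicted_watch_seconds, reverse=True)


candidates = [
    Video("cartoon clip", 12.0, is_risky=False),
    Video("breath-holding dare", 15.0, is_risky=True),  # three seconds longer
]

feed = rank_feed(candidates)
print([v.title for v in feed])
```

The ranker never looks at `is_risky`. A three-second edge in predicted watch time is enough to put the dangerous video first.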

The "blackout challenge" is a rebranding of a much older phenomenon known as the "choking game." In the pre-digital era, this was passed through word-of-mouth on playgrounds. It was contained by geography. Today, the digital version scales at the speed of light. A child in Wisconsin can be influenced by a peer in London within seconds.

There is a fundamental mismatch between the human brain and the machine. By age nine, children are highly attuned to social status but have limited impulse control. They see a peer achieving "fame" through a challenge and their biology screams for them to replicate it. The platform provides the stage, the audience, and the incentive. The danger is hidden behind the slick production of a short-form video.

Where Content Moderation Fails

Silicon Valley often points to its content moderation teams and AI filters as a shield. Companies claim to remove 99% of "violating content." This is a statistical sleight of hand. At a scale of billions of uploads, the 1% that slips through is still an enormous number of videos, and each one can rack up views before anyone removes it. The result is millions of dangerous impressions.
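To make that arithmetic concrete, here is a back-of-the-envelope sketch. Every number in it is a hypothetical assumption chosen for illustration, not a reported platform figure: 10 million violating uploads, a 99% removal rate, and 500 views per missed video.

```python
def missed_impressions(violating_uploads, removal_rate_percent, avg_views_per_missed_video):
    """Return (videos that slip through, total views those videos collect)."""
    missed_videos = violating_uploads * (100 - removal_rate_percent) // 100
    return missed_videos, missed_videos * avg_views_per_missed_video


# All inputs below are assumed values, not measured data.
missed_videos, impressions = missed_impressions(
    violating_uploads=10_000_000,
    removal_rate_percent=99,
    avg_views_per_missed_video=500,
)
print(missed_videos, impressions)
```

Under those assumptions, a 99% success rate still leaves 100,000 videos online and 50 million impressions.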

Moderation is reactive. By the time a "blackout" video is flagged and removed, it has already been downloaded, remixed, and re-uploaded by dozens of other accounts. This creates a "Whac-A-Mole" effect where the platform is always one step behind the trend. Furthermore, many of these videos don't use the word "blackout" in the caption. They use coded language, emojis, or trending songs to bypass keyword filters.
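The keyword problem is easy to illustrate. The sketch below assumes a naive filter that blocks captions containing a fixed list of terms; the blocklist, function name, and sample captions are hypothetical and far simpler than a production moderation system.

```python
# Hypothetical blocklist for a naive substring filter.
BLOCKED_KEYWORDS = {"blackout", "choking game"}


def passes_keyword_filter(caption: str) -> bool:
    """Return True if no blocked keyword appears in the caption."""
    text = caption.lower()
    return not any(keyword in text for keyword in BLOCKED_KEYWORDS)


captions = [
    "trying the Blackout challenge",  # plain wording: caught
    "bl4ck0ut chall3nge",             # character swaps: missed
    "pass out dare 😵 #fyp",          # different wording plus emoji: missed
]

results = {caption: passes_keyword_filter(caption) for caption in captions}
for caption, passed in results.items():
    print(f"{caption!r}: {'passes' if passed else 'blocked'}")
```

Only the first caption is blocked. The other two describe the same behavior and pass untouched.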

The industry’s reliance on "community reporting" is also a flaw. We are asking children—the primary consumers of this content—to be the moral arbiters of what is safe. A ten-year-old isn't going to report a video that looks exciting or popular; they are going to try to emulate it.

The Myth of Parental Controls

The standard corporate response to these tragedies is to suggest better parental supervision. It’s a convenient way to shift liability. However, modern "parental controls" are often just a thin veneer of protection.

- Algorithmic Leakage: Even if a parent sets an account to "restricted," the nature of the "For You" feed means that content can leak through if it hasn't been specifically categorized as adult material.

- The Multi-Device Reality: Children access these platforms on school tablets, friends' phones, and gaming consoles. Monitoring every second of screen time is a logistical impossibility for the average working family.

- The Sophistication Gap: Kids are often more tech-literate than their parents. They know how to clear histories, create "finstas" (fake Instagram accounts), and use VPNs to bypass household filters.

Placing the entire burden of safety on parents is like blaming a driver for a brake failure caused by a manufacturing defect. The product itself is designed to be addictive and self-perpetuating. When the product results in a death, the fault lies in the design, not just the supervision.

The Legal Shield of Section 230

In the United States, tech companies are protected by Section 230 of the Communications Decency Act. The law says a platform cannot be treated as the publisher or speaker of content that its users post. If a newspaper prints instructions on how to build a bomb, it can be held liable. If a social media platform's algorithm recommends a video on how to self-asphyxiate, the platform is currently shielded from most lawsuits.

Trial lawyers are now attempting to punch holes in this shield. The new legal strategy focuses on product liability. The argument is that the algorithm is not "speech" but a feature of a product. If that feature is found to be "defectively designed" because it promotes lethal content to minors, the platforms could finally face financial consequences.

Until the cost of a lawsuit exceeds the profit generated by the engagement, nothing changes. The industry operates on a "growth at all costs" model. Safety is treated as a PR department problem, not an engineering requirement.

The Physical Reality of Asphyxiation

We need to be blunt about what happens during these challenges. This isn't a "faint." It is the rapid deprivation of oxygen to the brain. When a child applies pressure to the carotid arteries or uses a ligature, they cut off blood flow to the brain and induce a state of hypoxia.

Within seconds, the brain begins to shut down non-essential functions. If the pressure isn't released immediately—which is impossible if the child has lost consciousness while using a fixed ligature—permanent brain damage occurs within minutes. Death follows shortly after.

The "rush" that children seek is actually the feeling of the brain struggling to survive. It is a biological panic masquerading as a high. Because the videos often cut away before the actual collapse, or show the child "waking up" and laughing, the lethal risk is completely erased from the viewer's perspective.

The Industry Analyst’s Verdict

I have watched this cycle for twenty years. A new platform emerges, it ignores safety in favor of rapid user acquisition, a tragedy occurs, and the company issues a statement about being "heartbroken." They promise to "do better" while changing nothing about the underlying code that caused the problem.

We are currently seeing a massive increase in these incidents because the algorithms have become too good at their jobs. They are so efficient at finding what "works" that they have inadvertently weaponized the peer-pressure instincts of the most vulnerable demographic on earth.

The solution isn't another "awareness campaign." It is a fundamental decoupling of engagement metrics from content distribution for users under the age of eighteen. If we don't force a change in how these feeds are curated, we are essentially presiding over a digital lottery where the prize for a few thousand views is a funeral.
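One way to picture that decoupling is the sketch below. It is an assumption about what such a rule could look like, not a description of any real platform: minors get a feed ordered by recency, and the engagement score is only consulted for adults. The `Post` fields and the choice of chronological ordering are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    engagement_score: float  # hypothetical predicted engagement
    posted_at: int           # hypothetical Unix timestamp


def order_feed(posts, viewer_age):
    """Order a feed; engagement only influences ranking for adult viewers."""
    if viewer_age < 18:
        # Minors: newest first. engagement_score is never read on this path.
        return sorted(posts, key=lambda p: p.posted_at, reverse=True)
    return sorted(posts, key=lambda p: p.engagement_score, reverse=True)


posts = [
    Post("viral-dare", engagement_score=0.9, posted_at=100),
    Post("recent-clip", engagement_score=0.1, posted_at=200),
]

child_feed = [p.post_id for p in order_feed(posts, viewer_age=9)]
adult_feed = [p.post_id for p in order_feed(posts, viewer_age=30)]
print(child_feed, adult_feed)
```

For the nine-year-old, the viral dare gets no boost from its engagement score. It only appears where its posting time puts it.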

Check your child's "Saved" and "Liked" folders today. Don't look for the word "blackout." Look for anything involving breath-holding, fainting, or "passing out" dares. If you find it, don't just delete the app—have a clinical, terrifyingly honest conversation about what happens to a brain without air.

Go to your device settings now and, where the options exist, disable "Background App Refresh" and search-term autocomplete for every social media app your children use. Neither setting changes what the feed recommends, but both add a little friction to the discovery engine.