The viral recording of a man resembling Jeffrey Epstein on Florida’s I-95 functions as a case study in the catastrophic failure of decentralized crowdsourced intelligence. When an individual’s physical morphology aligns with the visual memory of a high-profile decedent, the resulting "lookalike effect" triggers a chain reaction of digital confirmation bias that bypasses traditional verification protocols. This event is not a localized anomaly; it is a structural byproduct of a media ecosystem that prioritizes rapid pattern matching over forensic accuracy.

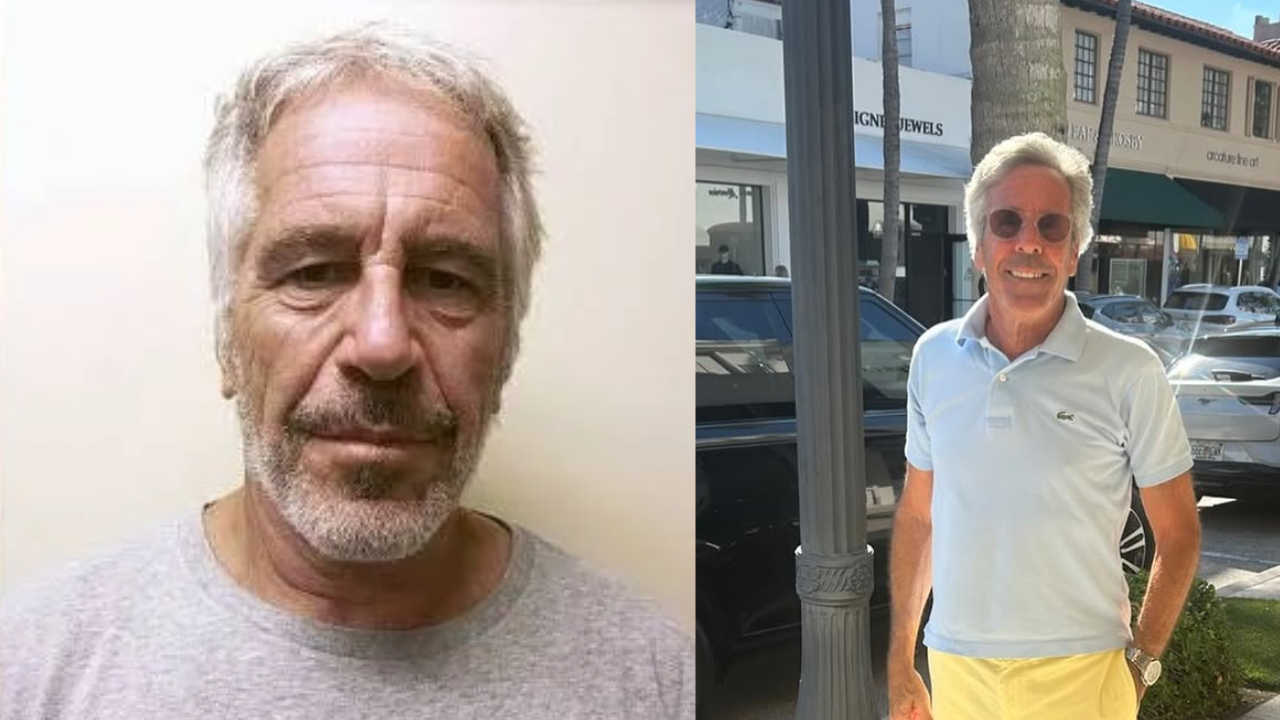

Understanding the trajectory of this specific incident—involving a Florida resident named Pete who was harassed following a roadside encounter—requires deconstructing the variables that transform a mundane visual coincidence into a national security-adjacent conspiracy theory.

The Triad of Viral Misidentification

The transition from a physical sighting to a global digital event relies on three specific operational pillars. Each pillar provides the momentum needed to override the obvious implausibility of the claim.

1. Visual Anchoring and Facial Recognition Fallacy

Human cognition is wired for pareidolia and rapid face recognition, but this system is easily exploited by low-resolution video and high-stress framing. In the I-95 footage, the viewer is primed by the uploader’s caption. That priming creates a cognitive anchor: the observer is no longer asking who the man is, but searching for features that confirm he is Epstein. The brain discards the "noise" (minor anatomical differences) and fixes on the "signal" (gray hair, a particular style of glasses, a similar facial structure).

2. The Persistence of the Dead-Body Conspiracy

The logic governing this viral event is rooted in the "Incomplete Closure" framework. Because the death of Jeffrey Epstein occurred under controversial circumstances with high-stakes political implications, the public psyche remains in a state of hyper-vigilance. Any visual evidence suggesting his survival acts as a release valve for this unresolved tension. The lookalike becomes a vessel for a pre-existing narrative, making the factual identity of "Pete" irrelevant to the digital mob.

3. Algorithmic Velocity vs. Human Correction

The speed at which the video traversed platforms like X (formerly Twitter) and TikTok created a "verification lag." By the time the subject could publicly establish his identity, the original video had spread so widely that the correction reached only a fraction of its audience. The result is a lasting digital shadow: the individual remains linked to the conspiracy in search engine results, regardless of the debunking.

Quantifying the Cost of Anonymity Erosion

For the individual at the center of the storm, the "Cost of Resemblance" is measurable across several dimensions. This is not merely an emotional grievance; it is a systematic degradation of personal security and professional viability.

- Security Overhead: The immediate need for private security or police intervention once the subject's vehicle or location has been doxxed.

- Digital Footprint Contamination: The long-term SEO damage where the individual's name becomes secondary to the high-volume search terms of the person they resemble.

- Psychological Attrition: The cognitive load of navigating a world where strangers treat you as a ghost or a criminal based on a 30-second clip.

The Florida incident highlights a specific vulnerability in modern privacy: "Public Space Liability." In an era of 4K smartphone cameras and ubiquitous 5G connectivity, any appearance on a public road carries the risk of being uploaded and dissected by a global audience in real time.

The Mechanism of Digital Character Assassination

The process follows a rigid, repeatable sequence:

- The Encounter: A high-contrast visual event occurs (e.g., a man who looks like a billionaire felon is seen at a rest stop).

- The Capture: Content is recorded with "Narrative Framing," meaning text overlays or voiceovers that dictate the conclusion to the viewer.

- The Distribution: Aggregator accounts with high follower counts repost the content to maximize engagement metrics, often using "Just Asking Questions" (JAQ-ing) as a legal shield against defamation.

- The Doxxing: The crowd uses background details (license plates, clothing brands, location tags) to identify the individual.

- The Confrontation: Real-world harassment begins, forcing the subject to "break silence," which paradoxically feeds the news cycle further.

This cycle reveals that truth is a low-value commodity in the attention economy. The "Pete" in this scenario had to wage a defensive media campaign just to reclaim his own identity, a forced labor of reputation management he never opted into.

Structural Failures in Platform Governance

Social media platforms operate on a "Post-hoc Moderation" model. They rarely intervene until a physical threat is reported, by which time the reputational damage is irreversible. The Epstein lookalike video thrived because it occupied a gray area: it wasn't technically "hate speech," but it functioned as a targeted harassment campaign.

The fundamental bottleneck is the lack of a "Right to Rectification" that operates at the same speed as the "Right to Post." When an individual is misidentified, there is no automated mechanism to link the correction to every instance of the original video. This creates a fragmented reality where different segments of the internet believe two entirely different sets of facts simultaneously.

Navigating the Post-Privacy Reality

The case of the Florida lookalike is a harbinger of a broader trend: the weaponization of "Average Joes." As deepfake technology and high-resolution surveillance converge, distinguishing a person from a digital or physical double will become steadily harder.

The strategic response for individuals and brands caught in these cycles must be clinical and swift:

- Immediate Identity Assertion: Use a high-authority platform to provide "Proof of Presence" elsewhere or "Proof of Identity" that is difficult to forge (e.g., live-streamed interviews with verified journalists).

- Legal Injunctions against Aggregators: Targeting the original uploader is often a dead end; the focus must be on the mid-tier aggregators who provided the scale for the defamation.

- SEO Counter-Programming: Flooding the digital environment with verified, high-authority content to push the viral incident to the second page of search results.

This incident suggests that the greatest threat to personal privacy is no longer state surveillance, but the uncoordinated, algorithmically driven curiosity of the masses. We have entered an era where being "unremarkable" is no longer a defense, as someone, somewhere, will find a way to map your face onto a monster.

The final move for any person in this position is to transition from a "victim" to a "case study." By establishing a consistent, repeatable account of the mistaken identity, the subject can dilute the conspiratorial power of the image. The goal is to move the conversation from "Is that him?" to "Why did we think that was him?" This shifts the focus from the individual's face to the audience's collective gullibility, neutralizing the threat by making the participants in the viral event the subjects of the critique.